Our paper "Global Vegetation Modeling With Pre-Trained Weather Transformers" has been accepted at ICLR24 TCCML Workshop

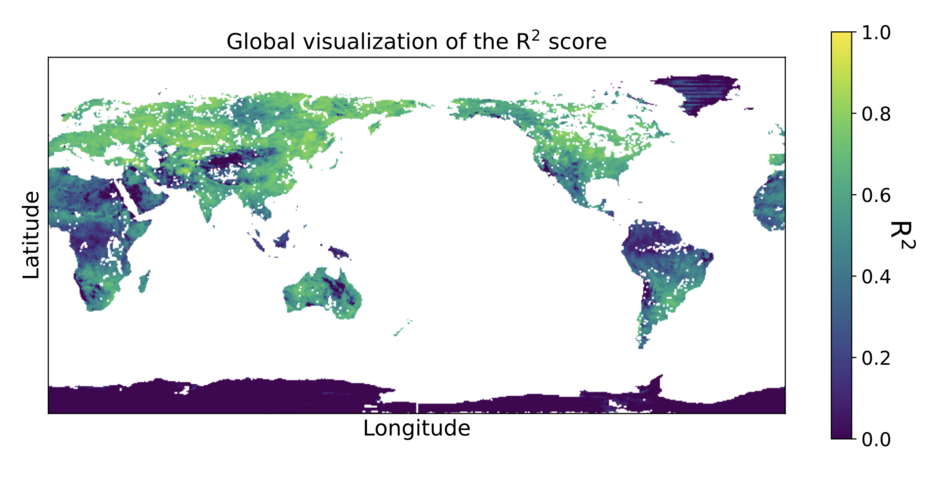

07.03.2024

We are pleased to announce that our paper "Global Vegetation Modeling with Pre-trained Weather Transformers" has been accepted as a spotlight talk at the TCCML workshop at ICLR 2024! In this publication, we use recent advances in machine learning weather modeling to globally model vegetation activity as measured by the normalized difference vegetation index (NDVI). To the best of our knowledge, our model is the first approach that models global vegetation activity with a single model, without using weight sharing between coordinates. As always, there is plenty left for future work: read the paper (once it is published :) ) to learn more!
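For readers unfamiliar with the target variable: NDVI is conventionally computed from near-infrared (NIR) and red surface reflectance as (NIR - Red) / (NIR + Red), giving values in [-1, 1] for non-negative reflectances, with dense green vegetation toward the high end. The snippet below is a minimal sketch of that standard formula only. The function name `ndvi` and the reflectance values in the usage line are illustrative assumptions, not part of our model or data pipeline, which estimates NDVI from meteorological inputs rather than computing it from reflectances.

```python
def ndvi(nir: float, red: float) -> float:
    """Standard NDVI formula: (NIR - Red) / (NIR + Red).

    Illustrative helper (hypothetical name), not code from the paper.
    """
    denom = nir + red
    if denom == 0:
        # NDVI is undefined when both reflectances are zero.
        raise ValueError("NIR + Red must be non-zero")
    return (nir - red) / denom


# Made-up illustrative reflectances for a vegetated pixel.
print(round(ndvi(0.5, 0.1), 3))  # 0.667
```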

Abstract (not yet published):

Accurate vegetation models can produce further insights into the complex interaction between vegetation activity and ecosystem processes. Previous research has established that long-term trends and short-term variability of temperature and precipitation affect vegetation activity. Motivated by the recent success of Transformer-based Deep Learning models for medium-range weather forecasting, we adapt the publicly available pre-trained FourCastNet to model vegetation activity while accounting for the short-term dynamics of climate variability. We investigate how the learned global representation of the atmosphere’s state can be transferred to model the normalized difference vegetation index (NDVI). Our model globally estimates vegetation activity at a resolution of 0.25° while relying only on meteorological data. We demonstrate that leveraging pre-trained weather models improves the NDVI estimates compared to learning an NDVI model from scratch. Additionally, we compare our results to other recent data-driven NDVI modeling approaches from machine learning and ecology literature. We further provide experimental evidence on how much data and training time is necessary to turn FourCastNet into an effective vegetation model. Code and models will be made available upon publication.
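To give a sense of scale for "globally at 0.25°": a regular latitude-longitude grid at that spacing has 360 / 0.25 = 1440 longitude columns and 180 / 0.25 = 720 latitude intervals, i.e. 721 latitude rows if both poles are included (the layout commonly used for 0.25° reanalysis data) or 720 if one pole row is dropped. The exact grid layout used in the paper is not stated here, so the sketch below, with its hypothetical helper `grid_shape`, simply shows both variants as an assumption.

```python
def grid_shape(resolution_deg: float, include_both_poles: bool = True) -> tuple[int, int]:
    """Return (n_lat, n_lon) for a regular global lat-lon grid.

    Hypothetical helper for illustration; assumes an equiangular grid
    spanning -90..90 degrees latitude and 0..360 degrees longitude.
    """
    n_lat = round(180 / resolution_deg) + (1 if include_both_poles else 0)
    n_lon = round(360 / resolution_deg)
    return n_lat, n_lon


print(grid_shape(0.25))                            # (721, 1440)
print(grid_shape(0.25, include_both_poles=False))  # (720, 1440)
```

Either way, a single global model at this resolution produces on the order of one million NDVI estimates per time step (721 × 1440 = 1,038,240 grid cells).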