Our paper "On Background Bias in Deep Metric Learning" has been accepted to ICMV 2022

27.09.2022

In this paper, we investigate whether Deep Metric Learning models are prone to background bias and test a method to alleviate it.

In this paper, accepted to the International Conference on Machine Vision (ICMV) 2022, we systematically investigate background bias in Deep Metric Learning (DML) models. We propose a test setting in which the backgrounds of images are replaced with random backgrounds, and we show that this substantially decreases model performance, with the size of the drop depending on the dataset. We then test a method we term BGAugment, which replaces backgrounds during training and leads to much better performance in our test setting. Finally, we analyze qualitatively and quantitatively which input regions the trained base and BGAugment models attend to. For a fair comparison, we introduce a new scoring function that makes it easy to measure how much attribution falls on the foreground and how much on the background.
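Both the test setting and BGAugment rely on the same basic operation: keeping the object and swapping everything behind it. The following is a minimal sketch of that compositing step, assuming a foreground mask is already available for each image and that images and backgrounds share the same size. The function names `replace_background` and `bg_augment` and the replacement probability `p` are hypothetical illustrations, not the paper's implementation.

```python
import numpy as np


def replace_background(image: np.ndarray, fg_mask: np.ndarray, background: np.ndarray) -> np.ndarray:
    """Hypothetical helper: paste the foreground of `image` onto `background`.

    image, background: float arrays of shape (H, W, 3) with values in [0, 1]
    fg_mask: float array of shape (H, W), 1.0 on the object and 0.0 elsewhere;
             soft values in between blend the two images.
    """
    if image.shape != background.shape:
        raise ValueError("image and background must have the same shape")
    alpha = fg_mask[..., None]  # (H, W, 1) so the mask broadcasts over color channels
    return alpha * image + (1.0 - alpha) * background


def bg_augment(image, fg_mask, backgrounds, rng, p=0.5):
    """Hypothetical training-time augmentation: with probability p (an assumed
    parameter), composite the object onto a randomly chosen background."""
    if rng.random() < p:
        bg = backgrounds[rng.integers(len(backgrounds))]
        return replace_background(image, fg_mask, bg)
    return image
```

For example, with a white 4x4 image whose central 2x2 pixels are marked as foreground and an all-black background, `replace_background` keeps the center white and turns the border black. Applying the same function to test images, always with a new random background, corresponds to the kind of evaluation setting described above.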

The paper will be presented at ICMV 2022, which takes place from 18 to 20 November 2022 in Rome, Italy.

Abstract

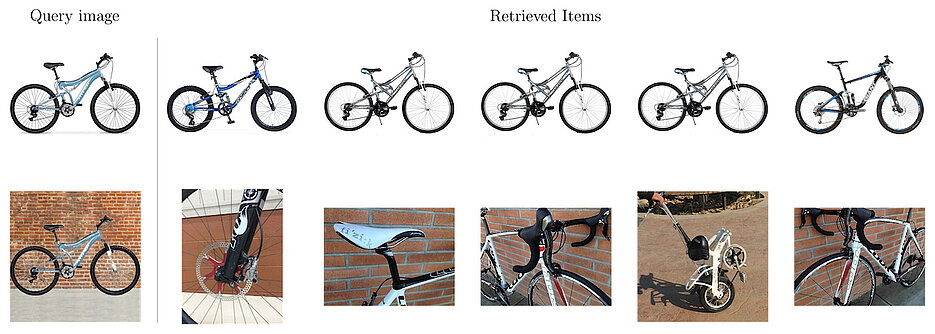

Deep Metric Learning trains a neural network to map input images to a lower-dimensional embedding space such that similar images lie closer together than dissimilar ones. In item retrieval, a query image is embedded with the trained model, and the database items whose stored embeddings are closest to the query embedding are returned as the most similar items. In product retrieval in particular, where a user searches for a product by photographing it, the image background is usually irrelevant and should therefore not influence the embedding. Ideally, the retrieval process returns matching items for the photographed object regardless of the environment in which the photo was taken.
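The retrieval step itself is a nearest-neighbor search in the embedding space. Below is a minimal sketch, assuming embeddings are compared by cosine similarity (a common choice in Deep Metric Learning, but an assumption here) and that the database embeddings fit in memory as a NumPy array. The names `l2_normalize` and `retrieve` are hypothetical.

```python
import numpy as np


def l2_normalize(x: np.ndarray, eps: float = 1e-12) -> np.ndarray:
    """Scale each embedding to unit length so dot products equal cosine similarities."""
    return x / np.maximum(np.linalg.norm(x, axis=-1, keepdims=True), eps)


def retrieve(query_emb: np.ndarray, db_embs: np.ndarray, k: int = 5) -> np.ndarray:
    """Hypothetical helper: return indices of the k database items whose
    embeddings are most similar to the query embedding.

    query_emb: shape (D,), the embedding of the query image
    db_embs:   shape (N, D), precomputed embeddings of the database items
    """
    sims = l2_normalize(db_embs) @ l2_normalize(query_emb)  # cosine similarities, shape (N,)
    return np.argsort(-sims)[:k]  # highest similarity first
```

For instance, with database embeddings `[[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]` and a query `[0.9, 0.1]`, `retrieve(query, db, k=2)` returns indices `[0, 2]`. Background bias shows up in exactly this step: if the background shifts the query embedding, a different set of neighbors is returned. For large databases, an approximate nearest-neighbor index would typically replace the brute-force search.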

In this paper, we analyze the influence of the image background on Deep Metric Learning models using five common loss functions and three common datasets. We find that Deep Metric Learning networks are prone to so-called background bias, which can severely decrease retrieval performance when the image background is changed at inference time. We also show that replacing the backgrounds of training images with random background images alleviates this issue. Because this background replacement uses an automatic background removal method, it requires neither additional manual labeling nor changes to the model, and inference time stays the same. Qualitative and quantitative analyses, for which we introduce a new evaluation metric, confirm that models trained with replaced backgrounds attend more to the main object in the image, which benefits item retrieval systems.
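To make the idea of measuring attribution on the foreground versus the background concrete, here is a simple illustrative score: the share of total absolute attribution that falls inside the foreground mask. This is an assumption for illustration only and is not the evaluation metric introduced in the paper. The function name `foreground_attribution_share` is hypothetical, and the attribution map is assumed to come from some saliency or attribution method that produces one value per pixel.

```python
import numpy as np


def foreground_attribution_share(attribution: np.ndarray, fg_mask: np.ndarray) -> float:
    """Hypothetical score: fraction of total absolute attribution on the foreground.

    attribution: (H, W) per-pixel attribution map from an (assumed) attribution method
    fg_mask:     (H, W) binary mask, 1 on the object and 0 on the background
    Returns a value in [0, 1] (higher means more attribution on the object),
    or NaN when the attribution map is all zeros and the share is undefined.
    """
    attr = np.abs(attribution)
    total = attr.sum()
    if total == 0:
        return float("nan")
    return float((attr * fg_mask).sum() / total)
```

For example, an attribution map `[[0, 1], [3, 0]]` with only the bottom-left pixel marked as foreground yields a share of 0.75. Note that such a raw share also grows with the area the object covers, so comparing it across images with differently sized objects is not fully fair; the sketch is only a starting point and does not reproduce the paper's scoring function.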