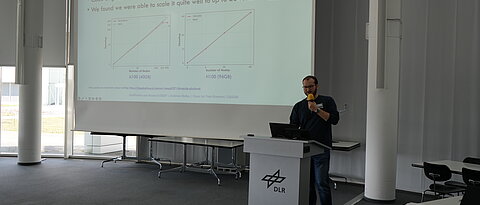

How do you build scalable, transparent language models for German entirely from scratch? We had the opportunity to discuss exactly that at the DLR in Ulm. The focus was on our model families LLäMmlein (120M–7B) and ModernGBERT (138M–1B), as well as the particular challenges of German-only tokenization.
